The first human genome project took 12 years; technology can now do it in 3 days

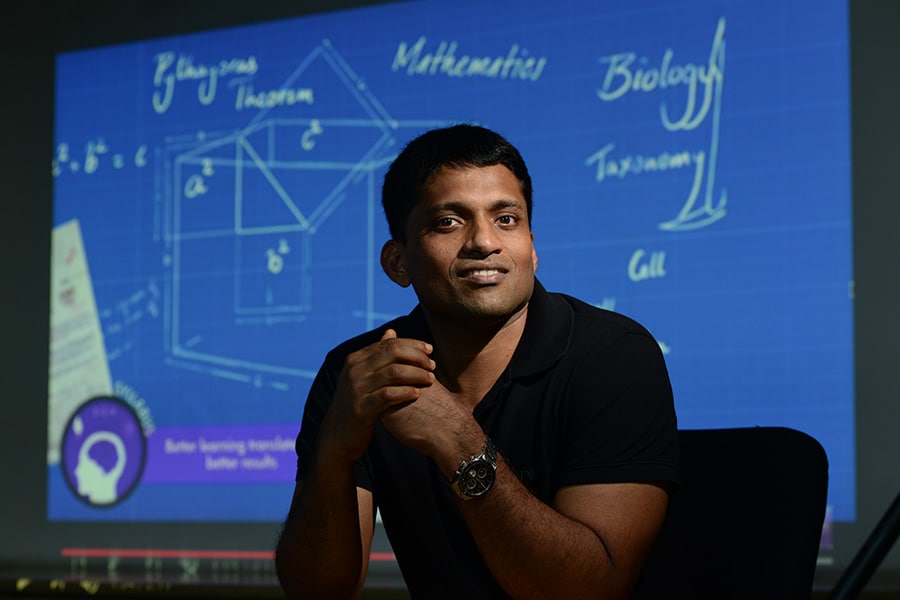

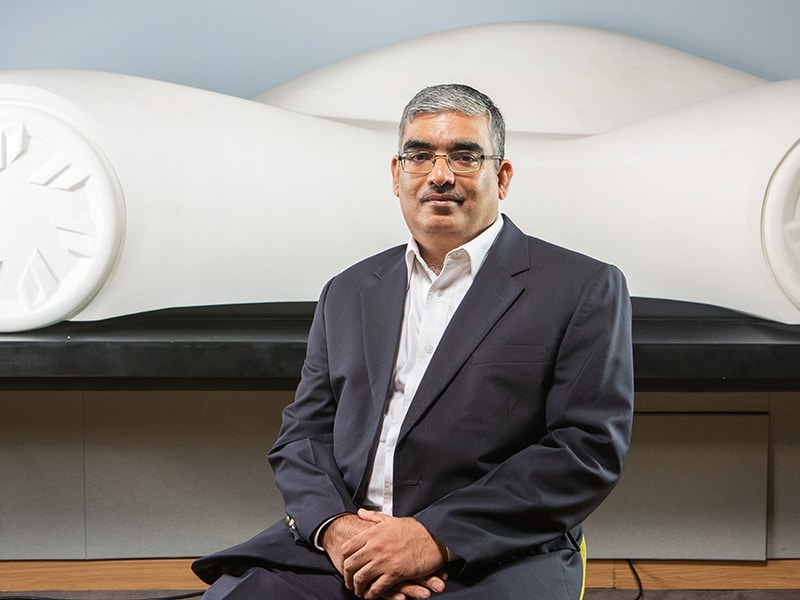

Sam Santhosh, Chairman & CEO, MedGenome

In less than eight years, Bengaluru-headquartered MedGenome has emerged as the market leader in genomic diagnostics. To enable this, it works with a network of more than 550 hospitals and 5,500 clinicians to carry out diagnostic tests. This has fuelled the growth of MedGenome’s clinical databases of genetic variants from India. Its data models and solutions now help pharmaceutical and biotech companies innovate faster and develop targeted therapies. To find out how, Forbes India spoke with Sam Santhosh, Chairman & CEO, MedGenome.

You have spent two decades in software product engineering. What drew you to genetics?

Around mid-2000, the Human Genome Project was completed. That was the first time a human genome had been sequenced, and it attracted a lot of publicity. At the time, I wondered what was happening and what its impact could be. That got me interested in the subject, and it was the start of a long journey.

Did your experience in product engineering play a role, given that this field sits at the intersection of genetics and technology?

Very much so. Although I was in product engineering, the main area we focused on was system-level programming. So we handled a lot of source code, whether it was operating systems, device drivers or filters, things like that. I was always amazed at the power of source code. When the genome was sequenced, it felt similar: you were actually getting the source code of life. And now we had the power, just as we would with any other program, to analyse and understand the source code of not only humans, but of any living organism.

What’s the technology apparatus needed for genetic sequencing?

Basically, genetic sequencing has two parts. The first is the wet lab, where you go through a number of steps to extract the DNA from a sample, whether it is blood or tissue. Then we amplify the DNA and put it into a sequencing machine, which converts the biological material into the source code.

The source code is basically four letters, A, T, C and G, which stand for the four chemicals (adenine, thymine, cytosine and guanine) that make up DNA. That is one part of the process, for which we need expensive machinery and a high-quality lab to avoid contamination, among other things. But that is just half the story.

The other half is the investment and infrastructure needed to analyse this data. Though it is only four letters, a human being’s genetic code runs into terabytes of data because these letters repeat so many times. We need to get the data out and check its quality, because with current sequencing technology the DNA is cut into many small pieces before it is sequenced, so stitching the pieces back together and working out the order of the letters is tough. This means we need a lot of quality checks. Once you have that data, we need many more tools to figure out what it means. This is the second part of genetic sequencing. Together, the two parts need a lot of money, as well as time and knowledge.
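To make the idea of a quality check a little more concrete, here is a minimal Python sketch. It assumes reads arrive in the widely used FASTQ convention, where each base carries a Phred quality score Q stored as an ASCII character with an offset of 33, and Q relates to the probability P that the base call is wrong by Q = -10 log10(P). The function names and the cutoff of Q20 (roughly a 1-in-100 error chance) are illustrative assumptions, not a description of MedGenome’s pipeline.

```python
# Illustrative sketch only: a toy read-quality filter, not a production pipeline.
# Assumes Phred+33 quality encoding, as used in modern FASTQ files.


def phred_scores(quality_line: str, offset: int = 33) -> list[int]:
    """Decode an ASCII quality string into per-base Phred scores."""
    return [ord(ch) - offset for ch in quality_line]


def error_probability(q: int) -> float:
    """Probability that a base call is wrong, from Q = -10 * log10(P)."""
    return 10 ** (-q / 10)


def passes_quality(quality_line: str, min_mean_q: float = 20) -> bool:
    """Keep a read only if its mean Phred score meets the (assumed) threshold."""
    scores = phred_scores(quality_line)
    return bool(scores) and sum(scores) / len(scores) >= min_mean_q


def filter_reads(records, min_mean_q: float = 20):
    """Filter (sequence, quality) pairs, dropping malformed or low-quality reads."""
    return [
        (seq, qual)
        for seq, qual in records
        if len(seq) == len(qual) and passes_quality(qual, min_mean_q)
    ]


if __name__ == "__main__":
    reads = [("ACGTAC", "IIIIII"), ("ACGTAC", "######")]
    print(filter_reads(reads))  # the second read (Q=2 per base) is dropped
```

Real pipelines usually go further, for example trimming low-quality ends of reads instead of discarding whole reads; a single mean-score cutoff is simply the easiest rule to show.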

How did India become relevant to the global ecosystem of genetic sequencing?

India, unfortunately, was left out of the first part of this whole revolution. India had a strange rule that didn’t allow samples to leave the country for research. So, unlike other projects, such as rice, where India was part of the global consortium, we were not part of the Human Genome Project. India has been something of a late entrant. But then we realised that the Indian population is genetically far more distinctive and valuable than most of the global population. So there is a lot of potential for genomics to grow from India.

Has the law evolved or does that continue to be the case?

The law has eased, but it matters less now because companies like us have labs here. Samples don’t need to leave the country, as they did 15 or 20 years ago, so you can do the genetic sequencing here.

Why wasn’t it possible to apply analytics in genetics on such a scale 10 or 15 years ago?

First of all, DNA sequencing is only about 30 or 40 years old, and it began with an older method called Sanger sequencing. Dr Frederick Sanger, a two-time Nobel Prize winner, was instrumental in developing the technology for sequencing DNA. It was a very good, solid method, but very slow. You could only look at parts of the genome; sequencing one or two genes could take years. But the first Human Genome Project was done using that technology. It took 12 years, with eight or nine countries participating. It was huge! It cost $3 billion to sequence one genome. The technology was great, but it was very slow and could do very little. A person’s entire PhD program might involve sequencing and studying a single gene.

From the early 2000s onwards, new developments were enabled partly by the hype created by the Human Genome Project and partly by the convergence of several technologies: optics, chemistry and computing. Now we could cut the DNA into very small pieces and then use powerful optical technologies, using light to distinguish between the four bases in a high-throughput manner. That is the key part.

The breakthrough was splitting the DNA into millions of pieces, reading them in a high-throughput manner, and having the software power to stitch them back together. These were the new technologies that came up, but it did not happen overnight. It took six or seven years for this set of technologies to become standardised. Today it is called Next Generation Sequencing (NGS). Illumina became the leading company, bought a couple of other companies, and provided the platform that made this possible. Even now it has about 90 percent of the market, and it pioneered making this practical by bringing a machine to the desktop. In effect, what once took 12 years for the first human genome, we can now do in our lab in three days, and for less than $1,000! That is the power of what has happened. That is why we can now do what nobody could dream of 15 years ago.
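The “stitching it back” step can be illustrated with a toy greedy overlap assembler in Python. This is a deliberately simplified stand-in, not how any particular lab or vendor does it: it assumes short, error-free reads and repeatedly merges the pair of reads with the longest suffix-prefix overlap. In practice, clinical labs more commonly align reads to a reference human genome, and de novo assemblers typically rely on overlap graphs or de Bruijn graphs. The function names and the minimum overlap of 3 letters are illustrative assumptions.

```python
# Toy de novo assembly by greedy overlap merging (illustrative only).
# Assumes short, error-free reads; real tools must handle errors and repeats.


def overlap(a: str, b: str, min_len: int = 3) -> int:
    """Length of the longest suffix of `a` that equals a prefix of `b`.

    Returns 0 if no such overlap is at least `min_len` long.
    """
    start = 0
    while True:
        # Look for the first `min_len` letters of b somewhere in a.
        start = a.find(b[:min_len], start)
        if start == -1:
            return 0
        # Check the whole remaining suffix of a against the start of b.
        if b.startswith(a[start:]):
            return len(a) - start
        start += 1


def greedy_assemble(reads: list[str], min_len: int = 3) -> list[str]:
    """Repeatedly merge the pair of reads with the longest overlap."""
    unique = list(dict.fromkeys(reads))  # drop exact duplicates, keep order
    # Drop reads fully contained in another read; they add no new letters.
    contigs = [r for r in unique if not any(r != o and r in o for o in unique)]
    while len(contigs) > 1:
        best_len, best_i, best_j = 0, -1, -1
        for i, a in enumerate(contigs):
            for j, b in enumerate(contigs):
                if i != j:
                    olen = overlap(a, b, min_len)
                    if olen > best_len:
                        best_len, best_i, best_j = olen, i, j
        if best_len == 0:
            break  # no overlaps left: return the separate pieces (contigs)
        merged = contigs[best_i] + contigs[best_j][best_len:]
        contigs = [c for k, c in enumerate(contigs) if k not in (best_i, best_j)]
        contigs.append(merged)
    return contigs


if __name__ == "__main__":
    # Four overlapping 10-letter reads cut from one 19-letter sequence.
    reads = ["ATTAGACCTG", "AGACCTGCCG", "CCTGCCGGAA", "GCCGGAATAC"]
    print(greedy_assemble(reads))  # ['ATTAGACCTGCCGGAATAC']
```

Greedy merging is easy to follow but can collapse or misorder repetitive stretches of DNA, which is one reason real assembly and alignment software is far more elaborate.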

Could you outline MedGenome’s business? Who does the company serve?

MedGenome has two lines of business. One is our core: the diagnostic services that we provide here. As sequencing technologies emerged, they also enabled a set of new tests, or new diagnostics. These new diagnostics don’t replace the old tests; they enlarge the market with tests across many domains that can shed more light on why someone got a disease: was a gene responsible for it? That new set of findings has enabled our genetic diagnostics business. We were the pioneers in India of genetic testing using DNA sequencing. That part of the business has grown well.

As this business grew, we started getting projects from pharmaceutical companies which, as I said earlier, could not get access to Indian samples because the samples could not leave the country. Now, with our lab here, they were more than happy to give us projects where we could sequence samples and then share insights from the data with them on a collaborative basis. The data does not go out. We keep the data, but we allow them to study it and get insights from it. So that is the second line of business, which is mainly funded by pharma companies as well as academic labs, both in India and across the world. [Leading academic labs have projects and get grants.] These are the two revenue streams of MedGenome.

Lately, in the last couple of years, we have also been able to move into licensing. So we have a third revenue stream: we provide tools and the ability to analyse the data. Over the past three years, more than half our revenue has come from diagnostics. Most of the rest has come from research services, and the remainder from licensing.

Let’s talk about information security. Considering the nature of the data MedGenome works on, what security apparatus is required?

There is nothing unique or specific that we can do. Most businesses nowadays work with data; look at banks and other sectors. We follow all the standard procedures, like encryption and firewalls. But genetic data is very complex. It is not as simple as stealing a credit card number or committing some kind of bank fraud. Genetic data runs into terabytes. People spend years trying to make sense of such data. It is not easy to use, or even to access. You can’t just break in and get the data. You would need access for days or weeks to download any useful data.

Secondly, genetic data is very valuable, but on its own it does not make much sense. You also need the person’s clinical details and other information, and you need to correlate all of that to make sense of it. These are not kept together. So it is practically very difficult to steal genetic data unless somebody actually downloads all of it onto a drive and physically transports it.

But surely, the compliance and regulatory guidelines would be stringent in genetics, given that it is patient information?

What you’re talking about is privacy rules. Indian regulations are still a little behind, but most companies like us follow the strict regulations laid down by the US and other Western countries to protect personal data. MedGenome is a CAP-accredited lab. CAP [College of American Pathologists] accreditation is the international standard for how a lab should be run and how data should be stored. There are very clear guidelines, which we maintain and follow.

How has MedGenome’s path changed from its original vision seven years ago?

The vision sort of evolved. When we started, none of us realised the value of Indian data. Our initial vision was just to build a diagnostics company and use new technologies to provide affordable tests to the local population. At the time, the few people who were getting tests done were sending their samples to the US, which was prohibitively expensive, and some of these tests were not even available here. That was the first goal, and we were happy that we achieved it pretty quickly. In the past six years, we must have processed more than 150,000 patient samples across various tests. That has been a good growth path.

After the first couple of years, we realised, mainly from our customers, that the data is also very valuable: not just because it is genetic data, but because it comes from the Indian population, which is very ancient, split into groups a few thousand years ago and, more importantly, stuck to those groups. People did not interbreed or intermarry across groups until recently. That created what scientists call ‘population isolates’.

This also strengthens ‘founder effects’, because many of these populations were founded by small groups of people who moved away from the main population for whatever reason: maybe they were discriminated against, maybe they were looking for more opportunities, maybe they simply moved apart, and then they married only among themselves. That is not very common globally. There are a few pockets, like the Ashkenazi Jews in the US, or Iceland, where people interbred because of the country’s isolation as an island. India has about 4,000 isolates, which makes for a uniquely valuable database for finding new drug targets. Then our vision expanded. We realised we could provide affordable diagnostics to patients in India and also create a large genetic pool that can help find new drugs.

Although we talked about population groups, all humans share the same DNA. The basic genetic code is the same, so what you find in the Indian population applies to everybody. It is just that the data is very complex and huge, and finding the nuggets of information is very difficult. With smaller population groups, you are able to cut through the noise and get to the signal, and the insight you find applies to everybody. So our vision expanded, and we realised we could find new drug targets. That’s why we started the licensing business. Our goal is to help develop new drugs based on findings from India.

Has it translated into precision medicine and targeted therapy? Have we reached that point?

Absolutely. But not for everybody or in every situation. Many of our diagnostic tests enable precision medicine. Precision medicine means you are able to match a particular drug to a particular patient, instead of just giving a drug without knowing whether it will work. There are instances, but not everywhere. Cancer is one area where a lot of progress has been made. Now there are many drugs whose use depends not on what type of cancer you have, but on its genetic makeup. Earlier, treatment was mostly based on whether it was, say, breast cancer or head and neck cancer. But that is not really the right way to categorise it; what matters is which genetic mutation is driving the cancer.

So the same drug can be given to a patient irrespective of where in the body the cancer is, as long as the right genetic mutation is driving it. But again, this is not true for all cancers. Now about 30 percent of cancers, such as lung cancer or breast cancer, have very targeted therapies, with medicines that work and can actually cure the cancer if that gene was causing it. So progress is being made in many places. We are also part of that journey, enabling patients to take these tests and find out whether they have the mutation.

Given the size of the clinical database, how do you harness something like machine learning or AI, which could come in handy for faster detection?

The quantity of data is huge, and so is the number of patterns in it. Genetic data is ideal for many applications of AI and machine learning, so we use them practically every day. We don’t talk about it much because it is part of our daily work. But it is used at multiple levels. A lot of tools are needed to prepare genetic reports, especially when many genes are sequenced. Studying or analysing the data of one or two genes isn’t much of a challenge. But if you look at 20,000 genes, you have a lot of data, and it becomes practically impossible for conventional tools to make sense of it. So there is a lot of scope for automation and machine learning in diagnostics.

Similarly, on the research side, we have developed a lot of tools. We have spent a lot of time on cancer, where we have developed tools to look for new epitopes. In this area (predicting new epitopes), we use a lot of AI and hold a number of patents.

What’s the road ahead for genetic sequencing? What do you see as the future of MedGenome?

We are at a rapid growth stage. Though growth has been good so far, it is still just the tip of the iceberg. There are two or three big opportunities still to be explored. One is for diagnostic tests to become more affordable. Prices have come down a lot; in India, tests cost only a fifth of what they do abroad. But they can come down further, which will happen as volumes increase and new technologies arrive.

So the existing market will grow. But more importantly, many new tests are being developed, partly because of new findings and partly because the technology is getting better. Recently, we developed and launched a test for tuberculosis that sequences the whole genome of the tuberculosis bacterium directly from saliva, so you don’t have to wait for a culture. This was not practically possible earlier. In effect, what took six to eight weeks, we can now do in three days. Similarly, in cancer, liquid biopsy is emerging: just by taking the patient’s blood, you can look at the circulating tumour cells and find out what cancer the patient has. So the opportunities are becoming much bigger. We see genomics being used practically every day, with its applications having a tremendous impact, as geneticist Eric Topol says, from “womb to tomb”. You will be using genomics throughout your life.
